Industry Collaboration

Usability evaluation of Google Cloud’s Vertex AI

Industry

AI, Emerging tech

Duration

10 weeks

Role

UX Researcher

Skills

Usability testing, quantitative & qualitative research

Context

What is Vertex AI?

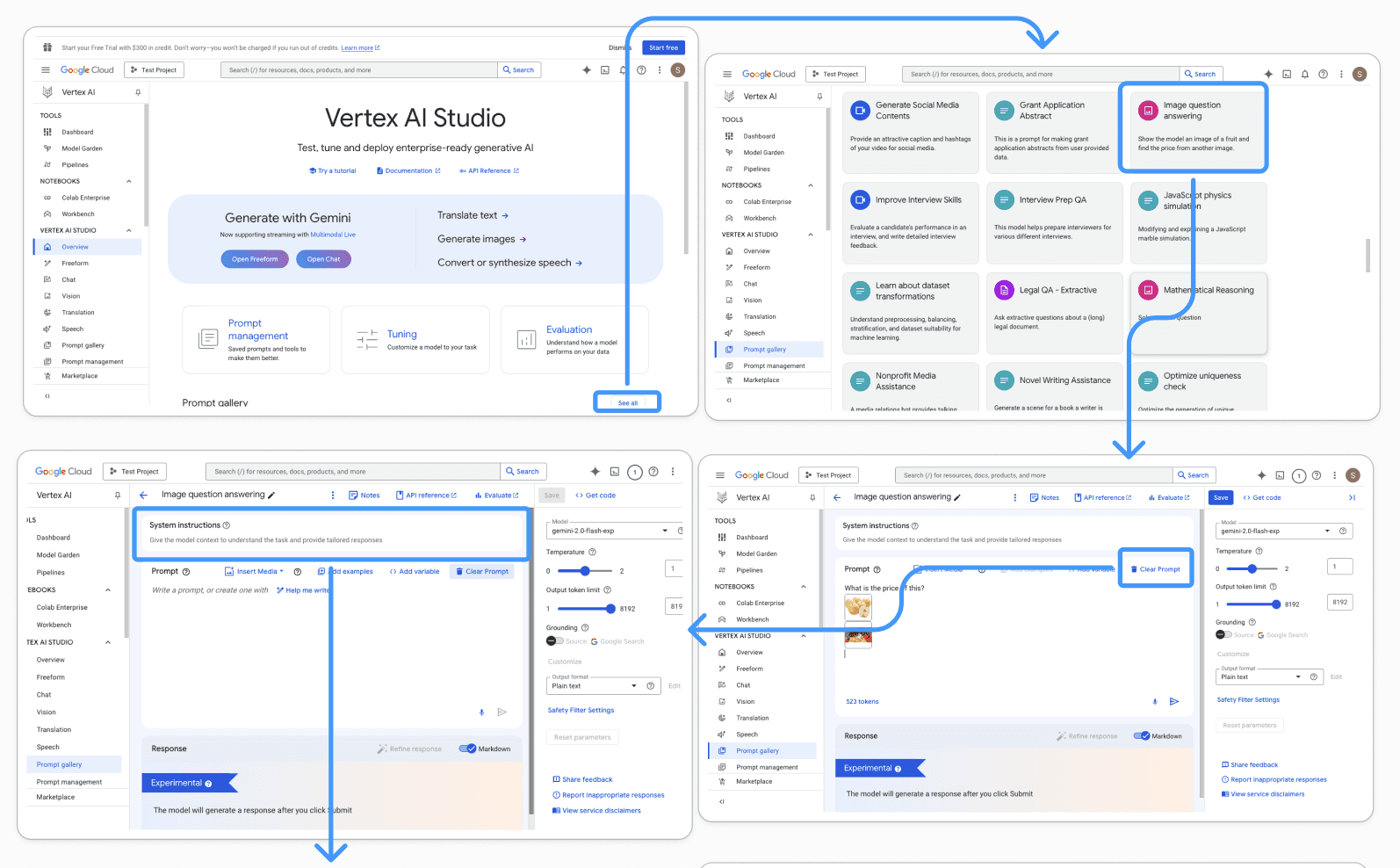

Vertex AI is a cloud-based machine-learning development platform that enterprise developers use to build and deploy AI models and applications at scale. The Vertex AI loginless experience is a free way to interact with Gemini models in Vertex AI Studio.

Challenge

Google wanted to identify usability challenges and uncover opportunities to improve user retention for the Vertex AI platform.

They asked us to conduct a usability study assessing the loginless web experience of Google Vertex AI Studio, focusing on how developers navigate and engage with its features.

Solution

What did we do?

As part of the HCDE Usability Studies course, I collaborated with a team of students and Google to conduct a usability test on Vertex AI. We were supported by a talented Google UX research manager and our knowledgeable professor.

What methods were involved?

The study ran for 10 weeks and involved 8 participants, including students and professional developers familiar with AI application development, all of whom were first-time users of Vertex AI.

Through 60-minute in-person moderated sessions on the University of Washington campus, participants completed tasks while thinking aloud. We used audio and video recording equipment to document participant reactions and website navigation. Additionally, questionnaires were administered at the completion of each task and after testing to obtain supplemental quantitative data.

This study focused on assessing 6 tasks that attempted to replicate the initial user journey, from accessing the landing page to getting code for the AI model.

Impact

🤩 Identified and presented 18 actionable insights to Google’s Vertex AI team

🤩 Delivered 2 additional usability issues based on qualitative feedback to improve the overall Vertex AI user experience

My role

... as a UX researcher from soup to nuts

I had the opportunity to collaborate with Google and a passionate team, where I actively contributed to creating the study plan, supported participant recruitment, moderated usability tests, captured detailed qualitative notes, and analyzed both qualitative and quantitative data.

Research questions

🧐 Is the loginless experience usable and satisfying?

🧐 What are the major frictions?

🧐 Does the loginless experience provide enough capabilities to engage and entice new users to sign up for more access?

Initial research

In order to familiarize ourselves with the tool, we conducted a heuristic evaluation.

Before recruiting participants, we conducted a cognitive walkthrough to better understand how developers would navigate the platform. We also spent time familiarizing ourselves with the relevant jargon and the fundamentals of how LLMs work.

Participant recruitment

We recruited 8 participants who had experience in AI, ML, or LLM development.

We used convenience sampling, reaching out to our personal networks and to 500+ computer science students through the University of Washington directories. We sent a screening survey to everyone who responded and excluded those who had prior experience with the Vertex AI platform or who were only available remotely.

Our inclusion criteria focused on recruiting participants who were familiar with programming languages and had experience using AI to build applications. To ensure consistency and accuracy of the data, participants were also required to attend sessions in person at the University of Washington.

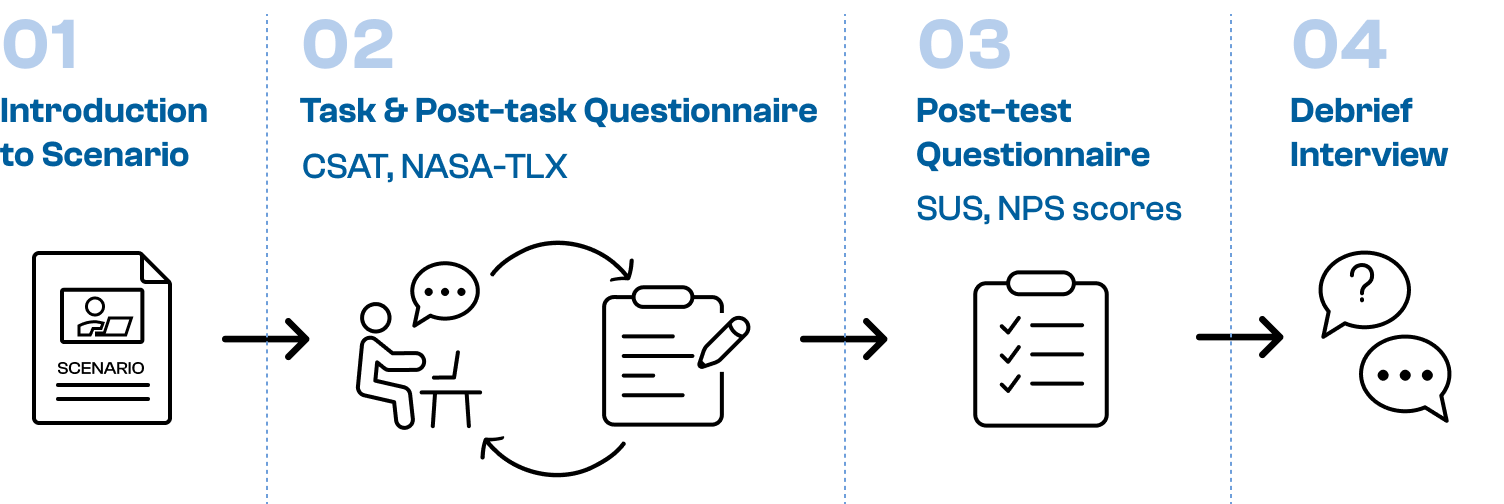

Testing procedure

We conducted in-person usability sessions in isolated study rooms on the UW campus.

Each session began with reading a standard scenario, followed by a set of tasks created specifically for each participant. Before starting, we obtained written consent through a signed consent form. A team of four facilitated each session: one moderator, one note-taker for qualitative insights, one timekeeper for task completion, and one observer tracking clicks to success and identifying click-path errors.

After each task, participants completed a post-task questionnaire to provide quantitative scores based on standard NASA-TLX and CSAT questions and qualitative feedback on their frustrations or satisfaction with the task. At the end of the test, participants filled out a post-test questionnaire based on standard SUS and NPS questions, summarizing their overall experience with Vertex AI after completing all the tasks. This allowed us to gather comprehensive insights into their interaction with the tool.
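For readers unfamiliar with the post-test measures, the standard SUS and NPS scoring rules can be sketched as follows. This is a minimal illustration, not the scripts used in the study: the function names and the sample answers are made up, and the real responses are under NDA.

```python
def sus_score(responses):
    """Score one participant's ten SUS answers (each 1-5) on the 0-100 scale."""
    if len(responses) != 10 or any(r < 1 or r > 5 for r in responses):
        raise ValueError("SUS needs ten answers between 1 and 5")
    total = 0
    for i, r in enumerate(responses):
        # Odd-numbered items (index 0, 2, ...) are positively worded: r - 1.
        # Even-numbered items are negatively worded: 5 - r.
        total += (r - 1) if i % 2 == 0 else (5 - r)
    return total * 2.5


def nps(ratings):
    """Net Promoter Score from 0-10 'likelihood to recommend' ratings."""
    if not ratings:
        raise ValueError("NPS needs at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 to 6
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical answers, for illustration only.
example_sus = sus_score([4, 2, 4, 1, 5, 2, 4, 2, 4, 3])
example_nps = nps([10, 9, 8, 7, 6, 5, 9, 10])
print(example_sus, example_nps)
```

With only 8 participants, scores like these are best read as directional signals alongside the qualitative findings rather than as precise estimates.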

Data analysis

After collecting the data, we conducted an affinity mapping exercise on the qualitative notes (gathered from mid-test observations, interviews, post-task feedback, and post-test responses) to identify recurring patterns and themes.

We reviewed the session recordings and extracted quotes for each task that directly highlighted issues with Vertex AI. To ensure a comprehensive analysis, we included quotes from a diverse range of participants, which allowed us to corroborate our findings and identify common usability challenges.

We tracked the time to completion for each task and recorded the number of clicks in corresponding tables, comparing these metrics to our predefined benchmarks and expectations. This comparison helped us link the qualitative insights with the quantitative data, strengthening the credibility of our findings.
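The comparison against benchmarks can be sketched in a few lines. This is an assumption-laden illustration: the task name, benchmark values, and observations below are all hypothetical, and the study's actual tables were kept in a different form.

```python
from statistics import mean

# Hypothetical benchmark and observations for a single task.
benchmarks = {"task_1": {"seconds": 60, "clicks": 3}}
observed = {"task_1": {"seconds": [45, 80, 70], "clicks": [3, 5, 4]}}


def compare_to_benchmarks(observed, benchmarks):
    """Return one summary row per task: averages and whether they exceed the benchmark."""
    rows = []
    for task, bench in benchmarks.items():
        avg_seconds = mean(observed[task]["seconds"])
        avg_clicks = mean(observed[task]["clicks"])
        rows.append({
            "task": task,
            "avg_seconds": avg_seconds,
            "avg_clicks": avg_clicks,
            "over_time": avg_seconds > bench["seconds"],
            "over_clicks": avg_clicks > bench["clicks"],
        })
    return rows


for row in compare_to_benchmarks(observed, benchmarks):
    print(row)
```

Tasks flagged as over the benchmark are the ones worth cross-checking against the qualitative notes for the same task.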

The post-task and post-test questionnaires were analyzed to create charts that reflected participant satisfaction (CSAT), overall usability (SUS), and perceived workload (NASA-TLX). These charts provided a clear visual representation of participants' experiences and further informed our understanding of Vertex AI's usability.
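As a rough sketch of how per-task numbers behind such charts can be derived, the snippet below assumes two common conventions that may differ from the ones used in the study: CSAT reported as the share of ratings of 4 or 5 on a 5-point scale, and NASA-TLX reported as "Raw TLX", the unweighted mean of the six subscales on a 0-100 scale. All names and values are hypothetical.

```python
TLX_SUBSCALES = (
    "mental", "physical", "temporal", "performance", "effort", "frustration",
)


def csat_percent(ratings, satisfied_from=4):
    """Percentage of ratings at or above the 'satisfied' threshold (assumed 4 of 5)."""
    if not ratings:
        raise ValueError("CSAT needs at least one rating")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100 * satisfied / len(ratings)


def raw_tlx(subscales):
    """Raw TLX: unweighted mean of the six NASA-TLX subscale ratings (0-100)."""
    return sum(subscales[name] for name in TLX_SUBSCALES) / len(TLX_SUBSCALES)


# Hypothetical ratings for one task, for illustration only.
task_csat = csat_percent([5, 4, 3, 2, 4, 5, 1, 4])
task_workload = raw_tlx({
    "mental": 60, "physical": 10, "temporal": 40,
    "performance": 30, "effort": 50, "frustration": 50,
})
print(task_csat, task_workload)
```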

The quantitative results are under NDA, but this illustrates how we chose to visually represent the CSAT, NASA-TLX, and SUS data.

Findings

We presented 18 actionable insights to the Google UX research team based on the following categories:

1. Task-based findings

We organized the results of our usability tests and questionnaires based on the tasks through which they were identified. We incorporated user quotes drawn from qualitative findings and rated the severity of each finding on a 4-point scale, sourced from the Handbook of Usability Testing by Jeffrey Rubin.

2. Significant non-task findings

These issues emerged outside the defined tasks but provided valuable insight into our research question on user retention.

3. Quantitative metrics

We used SUS, NPS, and CSAT questionnaires to support our qualitative findings.

Reflection

Key learnings

I learned that testing with participants of diverse expertise levels was essential. Beginners and advanced users faced different issues, and understanding both perspectives helped us prioritize recommendations that would benefit the broadest user base without limiting power users.

Triangulation of methods allowed us to gain comprehensive insights. Heuristic evaluation identified systemic issues, while qualitative interviews and quantitative analysis revealed how these issues manifested in real workflows.

If I did this again…

Eye-tracking software: our team wanted to incorporate eye tracking to supplement our quantitative data, but we dropped it due to technical constraints. I believe this data would have let us generate visual heat maps and further validate our usability recommendations.

Increase sample size: I would want to recruit larger samples to improve generalizability and explore stratified sampling approaches (e.g., level of experience) to gain better insights into differences in perceptions between subgroups.

Standardize moderation procedures: I would recommend having a single moderator and conducting multiple dry runs before initial testing in order to standardize procedures.